This is Part 6 in an ongoing series on disk performance. You can read the entire series by starting at the Introduction.

Pop quiz: If you had to configure 4 physical disks to hold data, which of these two options will result in the best performance:

- One RAID 10 array which will hold a single data file.

- Two RAID 1 arrays, each holding a data file that belongs to the same filegroup.

I asked this same question recently on SQLServerCentral.com (read the thread here – free registration required). The resulting debate showed me that the answer is not as obvious as it might seem. Recall that in RAID 10 a hardware-based controller card handles writing data evenly to all drives (unless you’re using software-based RAID, which is beyond the scope of this series). SQL MVP Tony Rogerson did some tests to show that SQL Server will write data evenly to multiple files if they are in the same filegroup. In principle, that makes the two RAID 1 drive scenario operate in much the same way as RAID 10.

Walks Like RAID 10, Talks Like RAID 10…

To find out which is the better choice I’ll use the results from Test Harness #1 – a Dell PowerEdge 2950 (view the test harness specifics here). I’ll look at the optimal settings first: 64 KB RAID stripe (for RAID 10), 64 KB partition offset, and 64 KB allocation unit. On the RAID 10 setup I tested a 128 GB data file using 8 threads with an IO queue depth of 8. On the RAID 1 setup I tested a 64 GB data file using 4 threads with an IO queue depth of 8. That’s half the file size and half the load I put on RAID 10; my voodoo math reasoning is that since I am using two data files on RAID 1, I can double the IOs/sec and MBs/sec results for the single data file and arrive at something that’s comparable to the RAID 10 load. Note that I’m doing it this way because SQLIO can’t measure the load on multiple data files simultaneously. Also, since latency is an average value I’m not doubling it for RAID 1 – instead I’m just doing a direct comparison to RAID 10.
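
To make the doubling arithmetic concrete, here is a minimal Python sketch of it. The `SqlioResult` class, the `scale_to_arrays` helper, and the input numbers are all hypothetical placeholders for illustration; they are not measurements from these tests and not part of SQLIO.

```python
from dataclasses import dataclass


@dataclass
class SqlioResult:
    """One summary row from a test run (hypothetical container)."""
    ios_per_sec: float
    mbs_per_sec: float
    avg_latency_ms: float


def scale_to_arrays(single: SqlioResult, arrays: int = 2) -> SqlioResult:
    """Estimate the combined result for `arrays` identical RAID 1 arrays.

    Throughput figures are multiplied by the number of arrays.
    Latency is an average, so it is carried over unchanged.
    """
    return SqlioResult(
        ios_per_sec=single.ios_per_sec * arrays,
        mbs_per_sec=single.mbs_per_sec * arrays,
        avg_latency_ms=single.avg_latency_ms,
    )


# Placeholder numbers only: 1000 IOs/sec at 8 KB each is 7.8125 MBs/sec.
one_raid1 = SqlioResult(ios_per_sec=1000.0, mbs_per_sec=7.8125, avg_latency_ms=30.0)
estimate = scale_to_arrays(one_raid1)
print(estimate)
```

The estimate assumes both arrays behave identically and that nothing upstream (controller, bus, CPU) becomes a bottleneck when they run together, which is exactly the part a single-file test cannot confirm.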

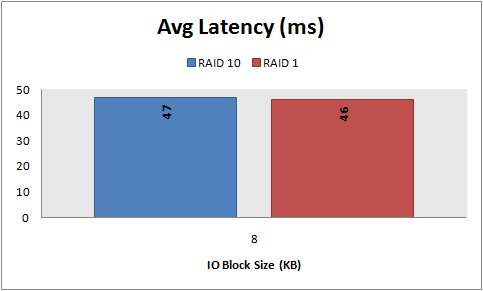

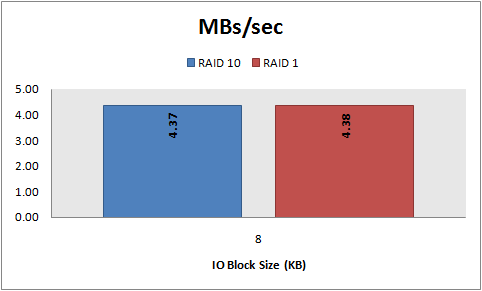

Here’s what 8 KB random reads look like:

And here’s what 8 KB random writes look like:

By the numbers it looks like the two RAID 1 drive scenario not only walks and talks like RAID 10, but behaves just like RAID 10 too.

Can Multiple RAID 1 Drives Really Outperform RAID 10?

I’ll admit, before I knew about using the right partition offset and allocation unit size I set up my servers using multiple RAID 1 drives. My gut feel was that it just seemed to perform better than if the same drives were set up in RAID 10. Let’s say for the sake of argument that you’re in the same situation - using multiple RAID 1 drives built using the defaults and after reading this series you’re thinking you need to rebuild everything as RAID 10. Is it really worth it? To find out, I’ll compare an optimal RAID 10 setup (64 KB stripe, 64 KB offset, and 64 KB allocation unit) against two RAID 1 drives built on the Windows defaults (32 KB offset and 4 KB allocation unit). On the RAID 10 I’ll use a 128 GB data file with 8 threads and an IO queue depth of 8. For RAID 1 I’ll use a 64 GB data file with 4 threads and an IO queue depth of 8, doubling the IOs/sec and MBs/sec to simulate the same load as the RAID 10 tests.

Here’s how 8 KB random reads look:

And here’s how 8 KB random writes look:

Once again the two RAID 1 drive scenario doesn’t look so bad. Obviously that’s not a very compelling argument to reconfigure your existing RAID 1 drives as RAID 10!

Conclusion

The point of these comparisons is to show that, in the most simplistic way, if you are already using multiple RAID 1 drives which hold data files in the same filegroup you’re not as bad off performance-wise as you may think. That said, if you’re setting up a server from scratch I’d recommend RAID 10 over multiple RAID 1’s for two reasons:

- Today’s hardware-based RAID controllers are pretty sophisticated and designed for reading data from and writing data to disks in the most efficient way possible. By comparison, SQL Server’s proportional fill algorithm doesn’t understand much about the physical disks on which files reside, nor does SQL Server employ the hardware-based caching techniques that are built into controllers (and which were not factored into any of these tests).

- Towards the end of Tony Rogerson’s post he mentions that SQL will only round robin writes if there is equal free space in each file in the filegroup. As soon as the free space is different you’ll have one set of disks taking more IO than the other. That won’t happen with a single data file on a RAID 10 disk.
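
The second point can be illustrated with a small Python sketch. It is a deliberately simplified model that assumes each file's share of new writes is proportional to its share of the filegroup's free space; SQL Server's actual proportional fill logic is more involved, and the `write_share` function and the free-space figures below are hypothetical.

```python
from typing import List


def write_share(free_space_mb: List[float]) -> List[float]:
    """Return each file's fraction of new writes in a simplified model.

    Assumption: writes are split in proportion to free space per file.
    """
    total = sum(free_space_mb)
    if total <= 0:
        raise ValueError("the filegroup has no free space")
    return [free / total for free in free_space_mb]


# Two files with equal free space: writes split evenly across both arrays.
equal = write_share([32768, 32768])

# One file has three times the free space of the other:
# its RAID 1 array now takes three quarters of the writes.
skewed = write_share([49152, 16384])

print(equal, skewed)
```

Under this model the two-array setup only matches the even spread of RAID 10 while the files stay balanced, for example when both are created at the same size and grown together.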

Next, in Part 7, I will compare a PowerVault 220S with a PowerVault MD1000.

6 comments

Nice review. Thank you.

Excellent, is very interesting. Good job.

Very interesting info - and I assume it's also fairly accurate. BUT I don't see the point of the PHYSICAL test that you performed. If the point is to compare a single RAID 10 disk to 2 RAID 1 disks, you have to find a way to do just that.

Testing a single RAID 1 disk and doubling the performance (or using a data file that is half the size) doesn't prove anything.

Again - I think your conclusions are correct, but I don't see the point in running the physical test when you are "calculating" your results anyway.

Wow, great benchmarks you got there. I would like to know, if you had 6 disks, setup as 3 RAID1 sets (2 disks each) to divide data, log, and tempdb, would you choose that one over a RAID 10?

To previous anonymous post -

The one factor that would need to be compared is the concurrency of reads/writes. If you're going to have the data/log/tempdb on a single set of synchronized spindles, they will be forced to serialize access to the whole unit.

On the other hand, if you had three logical units in a RAID1 configuration, you would be able to have up to three concurrent accesses.

This does disregard the transfer and access rate differences between a single RAID1 and a RAID10, so it's possible it would come out a wash.

In a worst case, you could have SQL code selecting from tempdb, inserting into a data file, and logging for the inserts flowing into the transaction log. And this could all take place in a single SQL command.

So which would you rather have in this scenario? Concurrent access to 3 RAID1's or a single wide RAID10? I'm betting on the former.

BTW - Great blog Kendal!

-Graham Tapscott

Lovely blog you have
